Premium content owners today hold catalogs full of high-quality video, yet much of that value remains untapped. Hidden within every show, movie, or live stream are thousands of high-value moments, such as key segments, defining plays, and emotional inflection points, that rarely get surfaced, discovered, or monetized.

In a world shaped by short-form videos and micro-interactions, the unit of value has fundamentally shifted. Discovery now happens in moments across all platforms, and these moments are the true drivers of engagement, monetization, and audience retention. Yet most content ecosystems are still built around long-form assets, leaving the granular value they can offer invisible.

The Shorts Revolution is Here to Stay

Long-form content still has an audience, but the growth is in short-form. 69% of adults aged 16–24 watch short-form vertical video every single day, and 85% do so at least weekly. Moreover, nearly two-thirds of the global online population is swiping through TikTok, YouTube Shorts, and Instagram Reels daily, a higher penetration rate than broadcast TV or streaming services combined.

This shift isn’t a threat. It’s an opportunity.

Quickplay’s Smart Verticalizer: Turning Moments into Value at Scale

If moments are now the unit of discovery and monetization, the next challenge for premium content owners is determining how to extract, adapt, and activate those moments across every platform their audiences use.

This feels overwhelming because traditional infrastructure was never designed for this. It was built to deliver full-length content to a limited number of destinations. But today's audiences are fragmented across owned-and-operated apps, social platforms, FAST channels, syndication networks, and emerging commerce surfaces. Delivering content to one or two endpoints is no longer enough. Content must be continuously transformed and distributed wherever attention exists.

This is where Quickplay’s Content to Value Operating System delivers immense business value by transforming moments into ready-to-publish experiences.

AI Studio is a solution built on the Content to Value Operating System. It connects content intelligence and short-form publishing into one production-ready workflow, with Smart Verticalizer handling format adaptation at scale. Broadcasters, sports operators, and streamers use it to turn catalog moments into platform-ready content without adding headcount or rebuilding their stack.

AI Studio transforms traditional workflows by moving from title-based processing to moment-based intelligence, automatically identifying and enriching high-value segments within long-form content.

Smart Verticalizer brings these moments to life by transforming them into high-quality, short-form experiences optimized for modern platforms through intelligent, context-aware reframing.

The reality is not all verticalization is created equal. Naively cropping widescreen content to 9:16 creates more than visual defects—it breaks the narrative. Contextual elements like tickers and graphics are cut, multi-speaker dynamics are lost, and in sports, the core action can fall outside the frame during motion. What remains is content that is technically resized, but editorially compromised. For a consumer brand with hard-won audience trust, that kind of output can be measurably damaging.
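
To put a number on what a naive crop throws away, here is a minimal sketch, assuming a 1920x1080 source and a fixed, centered crop window. The function name `center_crop_9x16` is ours for illustration and is not part of any Quickplay API.

```python
def center_crop_9x16(src_w: int, src_h: int) -> tuple[int, int, int, int]:
    """Return (x, y, w, h) of a centered 9:16 crop that keeps the full height.

    Assumes the source is wider than 9:16 (true for any 16:9 frame).
    """
    crop_w = src_h * 9 // 16      # widest 9:16 window that fits the source height
    crop_w -= crop_w % 2          # round down to an even width, which most encoders expect
    x = (src_w - crop_w) // 2     # center the window horizontally
    return x, 0, crop_w, src_h


x, y, w, h = center_crop_9x16(1920, 1080)
print(x, y, w, h, f"{w / 1920:.1%}")   # 657 0 606 1080 31.6%
```

A static center crop keeps under a third of the original width. Anything outside that strip, whether a lower-third ticker, a second speaker, or the ball, is simply gone.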

Tier-1 operators don't have the luxury of publishing substandard content and calling it "good enough for social." Their brand standards are built on decades of producing high-quality content, and the tools they use to participate in the creator economy need to meet that same expectation of quality.

How Smart Verticalizer Works

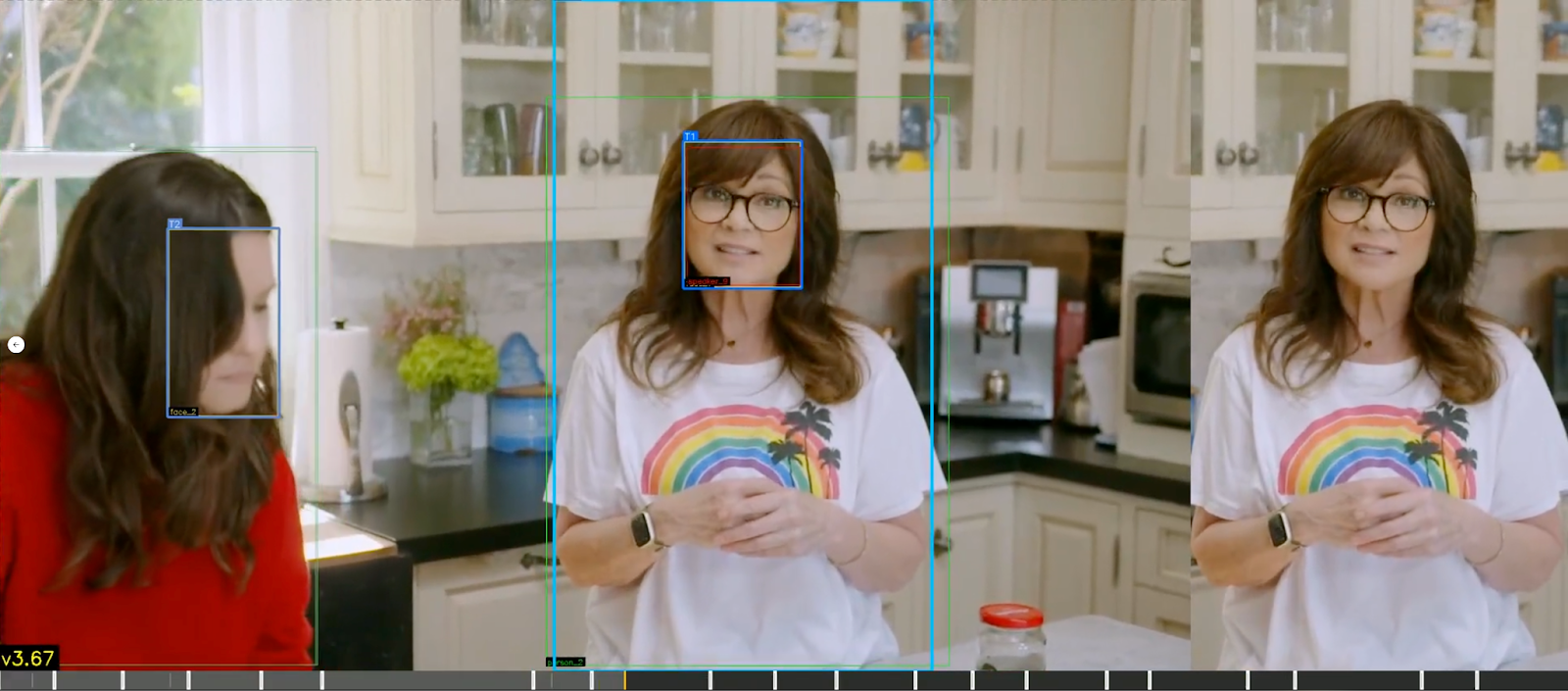

Unlike generic tools, Smart Verticalizer understands context. It recognizes that different types of content require fundamentally different treatment. A soccer match and a basketball game may both be sports, but they demand entirely different framing logic. A talk show, a reality TV show, and a scripted drama may all be entertainment, but their visual languages are distinct. Smart Verticalizer adapts accordingly.

The process begins with AI Studio analyzing the source video or live feed and applying multimodal AI to segment it into meaningful scenes, essentially the building blocks of moments. Each scene is enriched with deep metadata covering actors, characters, objects, dialogue, and narrative context, and is then encoded into vector embeddings for semantic understanding. This intelligence doesn't just describe content; it drives how each moment is selected, framed, and delivered.

Smart Verticalizer then executes a purpose-built verticalization pipeline that transforms each scene into broadcast-quality vertical output across five stages:

- Ingest & Analyze: Scene boundaries are detected, and each scene is analyzed with AI to identify faces, speakers, people, text, graphics, and activity type.

- Subject Intelligence: Detected subjects are ranked by prominence and a primary follow target is assigned per frame. The scene is then divided into segments at subject transitions, ensuring the crop never jumps mid-segment.

- Reframing & Composition: The optimal layout strategy is selected for each segment—single-subject fill, split-screen, picture-in-picture, or letterbox—and the crop window is positioned precisely, accounting for headroom, gaze direction, and centering.

- Motion Smoothing: Smooth trajectories are fitted to crop positions across each segment, producing fluid, broadcast-quality camera movement with no jarring cuts or abrupt pans.

- Quality Scoring & Delivery: Every crop candidate is scored across subject visibility, motion quality, composition, and text preservation. The highest-scoring result is automatically selected, with plain-English rationale provided for editorial review. Output is serialized into a structured format compatible with FFmpeg and other standard rendering pipelines.
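
The Subject Intelligence and Motion Smoothing stages can be illustrated with a short sketch. It assumes each frame already carries a follow-target label and a crop-center x position, and it uses a simple centered moving average as a stand-in for whatever trajectory fitting Smart Verticalizer actually performs. All names and values here are hypothetical.

```python
from itertools import groupby


def split_at_transitions(targets: list[str]) -> list[tuple[int, int, str]]:
    """Group consecutive frames that share a follow target.

    Returns (start, end, target) tuples with an exclusive end index.
    """
    segments, start = [], 0
    for target, run in groupby(targets):
        length = len(list(run))
        segments.append((start, start + length, target))
        start += length
    return segments


def smooth(values: list[float], radius: int = 2) -> list[float]:
    """Centered moving average; the window shrinks at the ends of the list."""
    out = []
    for i in range(len(values)):
        lo, hi = max(0, i - radius), min(len(values), i + radius + 1)
        out.append(sum(values[lo:hi]) / (hi - lo))
    return out


def smooth_per_segment(targets: list[str], centers: list[float], radius: int = 2) -> list[float]:
    """Smooth crop centers inside each segment, never across a subject transition."""
    result: list[float] = []
    for start, end, _ in split_at_transitions(targets):
        result.extend(smooth(centers[start:end], radius))
    return result


# Eight frames: the follow target switches from "host" to "guest" at frame 4.
targets = ["host"] * 4 + ["guest"] * 4
centers = [600, 640, 590, 630, 1300, 1340, 1290, 1330]

print(split_at_transitions(targets))
# [(0, 4, 'host'), (4, 8, 'guest')]
print(smooth_per_segment(targets, centers, radius=1))
# [620.0, 610.0, 620.0, 610.0, 1320.0, 1310.0, 1320.0, 1310.0]
```

Smoothing each segment on its own removes jitter within a shot, while the subject switch stays a clean cut rather than becoming a slow pan across the frame.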

Each output is delivered in a standardized dynamic crop format that supports multiple rendering modes to meet different platform and workflow requirements, from frame-by-frame precision for VOD publishing, to smooth interpolated keyframes for live and near-live delivery, to static crop positions for lightweight distribution. All modes are compatible with standard compositing workflows and provide full support for blurred self-background fills, picture-in-picture compositions, and custom background assets.
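
The actual crop format is not specified here, so the following is only a sketch of how the three rendering modes could relate to one another. It assumes a hypothetical keyframe layout of `(time_seconds, crop_x)` pairs with strictly increasing times. The only real-world syntax used is FFmpeg's `crop=w:h:x:y` filter.

```python
from bisect import bisect_right
from statistics import median

Keyframe = tuple[float, float]  # (time in seconds, crop x in pixels), a hypothetical layout


def x_at(keyframes: list[Keyframe], t: float) -> float:
    """Interpolated mode: linear interpolation between keyframes, clamped at both ends."""
    times = [k[0] for k in keyframes]
    i = bisect_right(times, t)
    if i == 0:
        return keyframes[0][1]
    if i == len(keyframes):
        return keyframes[-1][1]
    (t0, x0), (t1, x1) = keyframes[i - 1], keyframes[i]
    return x0 + (x1 - x0) * (t - t0) / (t1 - t0)


def per_frame(keyframes: list[Keyframe], fps: float, duration: float) -> list[float]:
    """Frame-by-frame mode: expand the keyframes into one crop x per output frame."""
    return [x_at(keyframes, f / fps) for f in range(int(round(duration * fps)))]


def static_crop_filter(keyframes: list[Keyframe], crop_w: int, crop_h: int) -> str:
    """Static mode: one fixed window at the median x, written as an FFmpeg crop filter."""
    x = int(median(k[1] for k in keyframes))
    return f"crop={crop_w}:{crop_h}:{x}:0"


keyframes = [(0.0, 600.0), (2.0, 700.0), (4.0, 700.0)]

print(x_at(keyframes, 1.0))                      # 650.0
print(per_frame(keyframes, fps=2, duration=4))
# [600.0, 625.0, 650.0, 675.0, 700.0, 700.0, 700.0, 700.0]
print(static_crop_filter(keyframes, 606, 1080))  # crop=606:1080:700:0
```

The static filter string could be passed to FFmpeg's `-vf` option as-is. The per-frame and interpolated modes would need a renderer that can vary the crop position over time.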

Smart Verticalizer applies specialized, AI-driven reframing strategies tailored to content type:

- Entertainment & Scripted: dynamic face tracking, scene-aware centering, and split-view compositions for dialogue-driven content

- News: intelligent ticker preservation using OCR and layout awareness, ensuring critical information remains intact

- Sports: motion-aware tracking, including ball trajectory prediction and athlete-focused framing for fast-paced action

- Animation: custom detection models for non-human characters, handling stylized visuals beyond traditional recognition systems

Every stage of the pipeline is tunable. Content operators can adjust framing parameters such as headroom, subject weighting, motion smoothness, minimum dwell time, and layout preferences, either at the content-type level or per individual run. Scoring weights can be recalibrated to prioritize what matters most for a given format, and detection sensitivity can be scaled between accuracy and speed depending on throughput requirements. This means the system can be configured one way for a premium sports rights holder and another way for a news broadcaster or a scripted entertainment studio, without requiring foundational engineering changes.
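
One way to picture content-type defaults with per-run overrides is a small layered configuration. This is a sketch under assumptions: the field names mirror the parameters listed above, but the class, the default values, and the `resolve` helper are all hypothetical and do not reflect Smart Verticalizer's real configuration schema.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class FramingConfig:
    # Every default below is an illustrative placeholder, not a product value.
    headroom: float = 0.12             # fraction of crop height kept above the subject
    subject_weight: float = 1.0        # relative priority of the primary subject
    motion_smoothness: float = 0.5     # 0 = follow raw positions, 1 = heaviest smoothing
    min_dwell_seconds: float = 1.5     # shortest time the crop stays on one subject
    layouts: tuple[str, ...] = ("single", "split", "pip", "letterbox")


# Content-type level: each type starts from its own tuned defaults.
CONTENT_TYPE_DEFAULTS = {
    "sports": FramingConfig(motion_smoothness=0.3, min_dwell_seconds=0.5),
    "news": FramingConfig(headroom=0.15, layouts=("single", "split", "letterbox")),
}


def resolve(content_type: str, **overrides) -> FramingConfig:
    """Start from the content-type defaults, then apply per-run overrides on top."""
    base = CONTENT_TYPE_DEFAULTS.get(content_type, FramingConfig())
    return replace(base, **overrides)


# Per-run level: a single sports job that wants slightly longer dwell times.
run_cfg = resolve("sports", min_dwell_seconds=0.8)
print(run_cfg.motion_smoothness, run_cfg.min_dwell_seconds)   # 0.3 0.8
```

Because the dataclass is frozen, a per-run override produces a new configuration and never mutates the shared content-type defaults.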

Individual outputs are evaluated against a comprehensive quality model that factors in subject visibility, motion smoothness, composition, and text integrity. The system automatically selects the best result, while providing a transparent, plain-English rationale for editorial review. Human editors remain in control, with the ability to refine before publishing. These interactions continuously improve the system through feedback loops, making it more precise over time.
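
A weighted sum is one simple way such a quality model could work. The sketch below assumes each candidate already has per-factor scores between 0 and 1. The four factor names come from the description above, while the weights, candidate values, and rationale wording are invented for illustration.

```python
DEFAULT_WEIGHTS = {
    "subject_visibility": 0.4,
    "motion_quality": 0.2,
    "composition": 0.2,
    "text_preservation": 0.2,
}


def score(metrics: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of the per-factor scores."""
    return sum(weights[k] * metrics[k] for k in weights) / sum(weights.values())


def pick_best(candidates: dict[str, dict[str, float]], weights: dict[str, float]) -> tuple[str, str]:
    """Return the top-scoring candidate and a short plain-English rationale."""
    best = max(candidates, key=lambda name: score(candidates[name], weights))
    metrics = candidates[best]
    strongest = max(weights, key=lambda k: weights[k] * metrics[k])
    rationale = (
        f"Selected '{best}' (score {score(metrics, weights):.2f}); "
        f"strongest factor: {strongest.replace('_', ' ')}."
    )
    return best, rationale


candidates = {
    "single": {"subject_visibility": 0.9, "motion_quality": 0.8,
               "composition": 0.7, "text_preservation": 0.3},
    "letterbox": {"subject_visibility": 0.5, "motion_quality": 0.9,
                  "composition": 0.5, "text_preservation": 1.0},
}

# Hypothetical recalibration for news, where on-screen text matters most.
news_weights = {"subject_visibility": 0.3, "motion_quality": 0.1,
                "composition": 0.1, "text_preservation": 0.5}

print(pick_best(candidates, DEFAULT_WEIGHTS)[1])
print(pick_best(candidates, news_weights)[1])
```

With the default weights the tight single-subject crop wins. With the news weights the letterbox layout wins because it preserves the on-screen text, which shows how recalibrating the scoring changes the automatic selection without touching the candidates themselves.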

The Future is Moments

Competing in the era of short-form requires building an intelligent production layer that understands content at a granular level, makes editorially sound decisions, and scales across entire catalogs. The winners won't be those with the most content; they'll be the ones who can unlock the most value from every moment.

Let’s win together. Email hello@quickplay.com to set up a meeting.